OpenClaw might not be how you build an agent for your business

2026-04-01

by Uri Walevski

There's a pattern emerging in the agent space. Give the agent a computer. A full operating system, a terminal, a browser. Let it do whatever it wants. OpenClaw, NemoClaw, and to some extent Claude Code and similar coding agents all follow this model. The agent is the computer, or at least it lives on one.

It's a compelling demo. You watch an agent open a browser, navigate a website, fill out forms, write code, run it. Feels like the future. But if you're building a product that serves actual users, this architecture will hurt you in ways that aren't obvious from the demo.

Most agent work doesn't need a computer

Think about what an agent actually does most of the time. It receives a message, calls an LLM to reason about it, maybe hits a few APIs, and sends a response. That's it. No filesystem, no terminal, no browser needed. For a customer support bot, a scheduling assistant, a sales agent, the compute you have to run yourself is close to zero. It's text in, text out, with some API calls in between.

Running a full VM for this is like renting a warehouse to store a single envelope.

Most business agents work with a closed set of tools. They call APIs, query databases, create records. These are predefined operations. The agent isn't writing code on the fly. It doesn't need a filesystem or a shell. It needs to call your tools with the right parameters. A library can do that. A container with a full OS is overkill.
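To make "a library can do that" concrete, here is a minimal sketch of a closed tool set in Python. The tool names (`create_ticket`, `lookup_order`) and the shape of the tool call are made up for illustration; in a real product they would wrap your own APIs and whatever format your LLM provider returns.

```python
from typing import Any, Callable, Dict


# Hypothetical tools. Real ones would call your own APIs or database.
def create_ticket(customer_id: str, subject: str) -> Dict[str, Any]:
    return {"status": "created", "customer_id": customer_id, "subject": subject}


def lookup_order(order_id: str) -> Dict[str, Any]:
    return {"order_id": order_id, "state": "shipped"}


# The closed set: the model can only ask for names listed here.
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "create_ticket": create_ticket,
    "lookup_order": lookup_order,
}


def dispatch(tool_call: Dict[str, Any]) -> Dict[str, Any]:
    """Run one tool call requested by the model, or refuse it."""
    name = tool_call.get("name")
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    try:
        return TOOLS[name](**tool_call.get("arguments", {}))
    except TypeError as exc:
        # Wrong or missing parameters come back as data, not as a crash.
        return {"error": str(exc)}
```

Nothing here touches a shell or a disk. Anything the model asks for that isn't in `TOOLS` simply gets an error back.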

When an agent does need a computer, it's for specific tasks. Clone a repo, run tests, execute a script, process a file. That's maybe 5% of what most agents do. The other 95% is pure reasoning and coordination.

One machine, one user

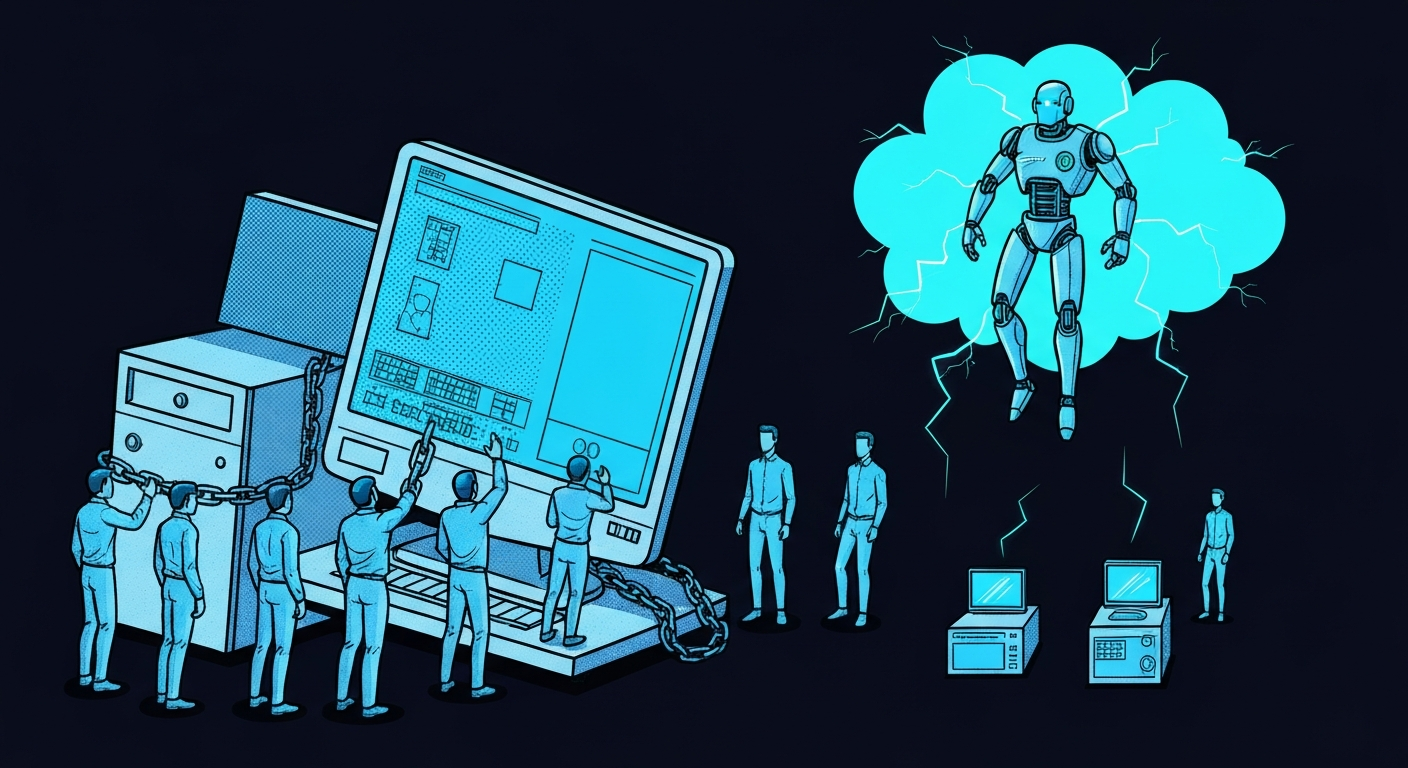

Coding agents like Claude Code are built for a developer sitting at their terminal. One human, one machine, one session. The agent uses your filesystem, your installed tools, your credentials. It works because you're watching it and you can hit Ctrl-C if it does something weird.

Try to make this serve 100 users and you hit a wall immediately. Each user needs isolation. You can't have two agents writing to the same filesystem, stepping on each other's processes, sharing environment variables. So you need a VM per user. Or a container per user. Either way, you're now running infrastructure that scales linearly with your user count, regardless of whether those users are actually doing anything that needs a computer.

Most of the time, they're not. They're sending messages and getting responses. But you're still paying for a running VM.

You can try to containerize this, spinning up instances on demand. But the core problem remains: you're running a full container per agent session. A hundred concurrent users means a hundred containers, most of them idle, all of them costing money.
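The arithmetic is simple enough to write down. This is a back-of-the-envelope sketch, not a pricing model: the hourly rate, the hours, and the busy fraction are placeholders you'd replace with your own numbers, and it ignores everything except the per-container bill.

```python
def fleet_cost(sessions: int, hourly_rate: float, hours: float) -> float:
    """Total bill when every session keeps its own container running."""
    return sessions * hourly_rate * hours


def idle_cost(sessions: int, hourly_rate: float, hours: float,
              busy_fraction: float) -> float:
    """The part of that bill spent on containers that are doing nothing."""
    if not 0.0 <= busy_fraction <= 1.0:
        raise ValueError("busy_fraction must be between 0 and 1")
    return fleet_cost(sessions, hourly_rate, hours) * (1.0 - busy_fraction)


if __name__ == "__main__":
    # Made-up inputs: 100 sessions, a hypothetical $0.05 per hour, 720 hours.
    total = fleet_cost(100, 0.05, 720)
    wasted = idle_cost(100, 0.05, 720, busy_fraction=0.05)
    print(f"total: {total:.2f}, spent on idle containers: {wasted:.2f}")
```

The busy fraction of 0.05 is a loose reading of the "maybe 5%" estimate above, so treat the result as an illustration of the shape of the problem rather than a measurement.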

The data isolation problem

This is where things get serious. An agent with access to a filesystem can write data. It will write data. Intermediate results, cached responses, temporary files. If two customers share infrastructure, you have a data isolation problem.

With an agent-on-a-computer model, proving data can't leak between customers is extremely hard. The agent has write access to an entire filesystem. You'd need to audit every possible path the agent could take, every file it could create, every environment variable it could set. In regulated industries, that's a compliance nightmare.

With a stateless agent that calls APIs through a controlled gateway, the isolation is structural. The agent doesn't have a filesystem. There's nowhere for data to leak to.
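Here is one way "structural" isolation could look, as a small Python sketch. `TenantGateway` and `AgentSession` are hypothetical names, and the in-memory dict stands in for whatever store sits behind a real gateway. The point is that the platform binds the tenant ID to the session, so the agent never gets to choose whose data it touches.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class TenantGateway:
    """Hypothetical gateway: every read and write is keyed by tenant."""
    _records: Dict[str, List[str]] = field(default_factory=dict)

    def write(self, tenant_id: str, note: str) -> None:
        self._records.setdefault(tenant_id, []).append(note)

    def read(self, tenant_id: str) -> List[str]:
        # Return a copy so callers cannot mutate the store directly.
        return list(self._records.get(tenant_id, []))


@dataclass(frozen=True)
class AgentSession:
    """What the agent sees: the tenant is fixed when the session is created."""
    tenant_id: str
    gateway: TenantGateway

    def remember(self, note: str) -> None:
        self.gateway.write(self.tenant_id, note)

    def recall(self) -> List[str]:
        return self.gateway.read(self.tenant_id)
```

Two sessions created for different tenants share the same gateway but can only ever see their own notes, because `remember` and `recall` take no tenant argument at all.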

Fragility compounds

A laptop isn't a production environment. Neither is a VM that an agent has root access to.

Agents install things. They modify configs. They write files in unexpected places. On a single developer's machine, this is manageable because the developer notices and fixes things. In a multi-user product, there's no human watching each VM. The agent corrupts its own environment and nobody finds out until a user reports that things stopped working.

Then there's the gap between a developer tool and production infrastructure. These frameworks are built for a developer's laptop. Real production environments have network policies, proxy requirements, egress controls, security constraints. Things that work fine locally break silently when deployed behind corporate firewalls or in locked-down clusters. Each of these is a small fix on its own, but they multiply fast when you're running agent infrastructure inside a controlled environment.

The failure modes compound. The agent installs a package that conflicts with something else. A system update breaks a dependency. The agent fills up the disk with logs. Each of these is individually rare, but across hundreds of instances, something is always broken.

The harness isn't the moat

OpenClaw is a harness. It gives an LLM a computer to work with. That's useful, but it's not where the value is in your product. The value is in your domain knowledge, your tooling, your data pipeline, the way you organize information for the LLM to process without hallucinating. That's the hard part.

If the harness brings operational complexity that distracts from building the actual product, it's working against you. A good agent framework should disappear into the background, not become the primary infrastructure challenge.

Separation of control and compute

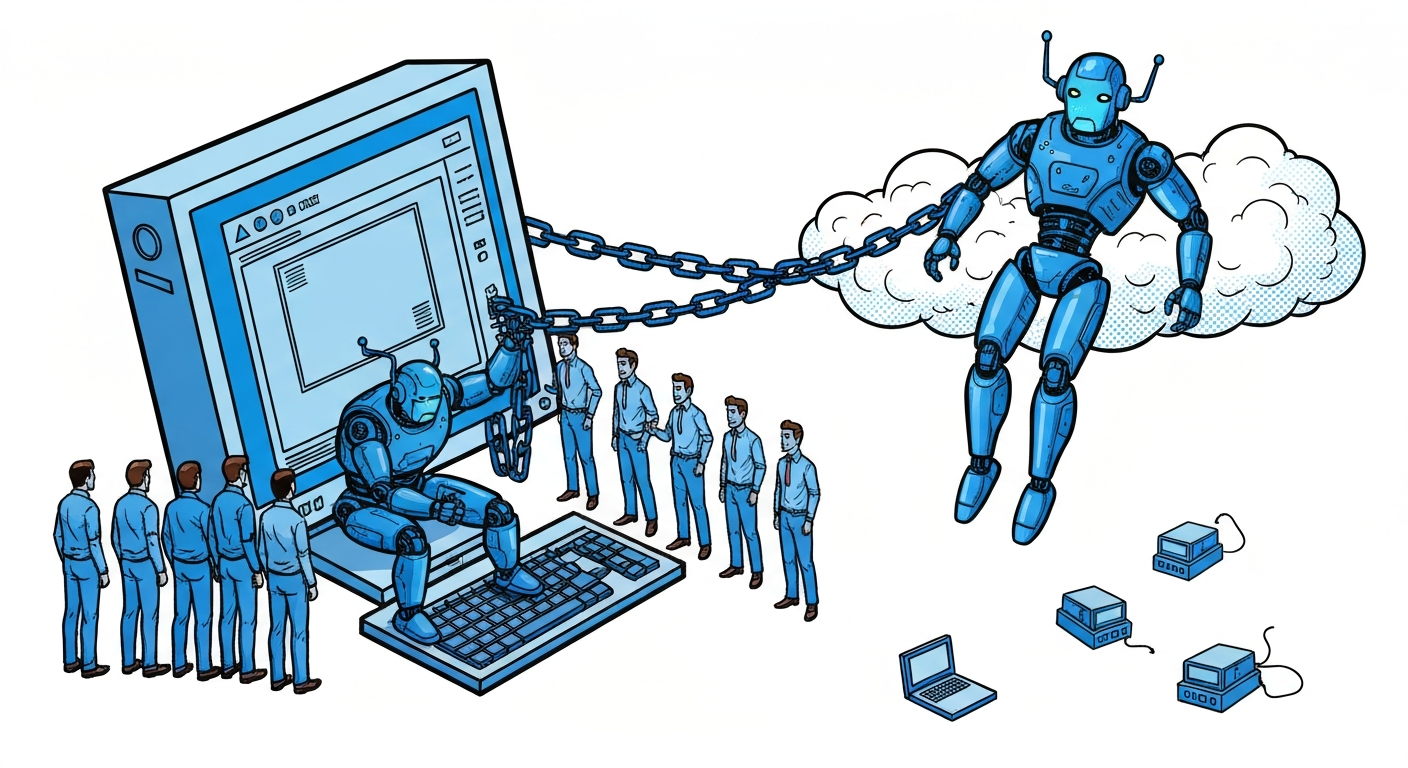

The alternative is to keep the agent in a controlled, managed environment and give it access to a VM when it needs one. The agent runs on your infrastructure, stateless and scalable. When a task requires code execution or filesystem access, a VM spins up, the agent uses it, and the VM goes away or idles until the next task.

This means you're paying for compute only when compute is happening. The agent can serve thousands of conversations simultaneously because most of them don't need a machine. And when they do, the VM is isolated, ephemeral, and disposable. If it gets corrupted, spin up a new one.
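A toy version of that lifecycle, in Python. To keep it runnable, a temporary directory stands in for the VM, which is a big simplification: a real system would call a VM or container provider here. The `run:` prefix is also just a made-up way of marking messages that need a machine.

```python
import tempfile
from contextlib import contextmanager
from pathlib import Path
from typing import Iterator


@contextmanager
def ephemeral_workspace() -> Iterator[Path]:
    """Stand-in for "spin up a VM": a directory that is deleted on exit."""
    with tempfile.TemporaryDirectory(prefix="agent-task-") as tmp:
        yield Path(tmp)


def handle(message: str) -> str:
    """Answer most messages directly; allocate a workspace only on demand."""
    if message.startswith("run:"):
        with ephemeral_workspace() as workspace:
            task_file = workspace / "task.txt"
            task_file.write_text(message[len("run:"):])
            size = len(task_file.read_text())
            return f"processed {size} characters in a sandbox"
    return "answered without a sandbox"
```

Whatever the task writes disappears with the workspace, so a corrupted environment never survives to the next task.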

More importantly, there's a control plane between the agent and the computer. The agent can't accidentally nuke its own runtime. It can't leak credentials that live in its environment because the credentials aren't in its environment. The orchestration layer can enforce policies, rate limits, and access controls that the agent can't circumvent even if it tries.
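As a rough illustration of that control plane, here is a Python sketch of an egress check that also attaches credentials. `ControlPlane`, `OutboundRequest`, and the bearer-token scheme are all assumptions made for the example, not a description of any particular product. The agent builds a request with no secret in it, and the layer in between decides whether it goes out and what credential it carries.

```python
from dataclasses import dataclass
from typing import Dict
from urllib.parse import urlsplit


@dataclass(frozen=True)
class OutboundRequest:
    url: str
    headers: Dict[str, str]


class ControlPlane:
    """Hypothetical policy layer sitting between the agent and the network."""

    def __init__(self, secrets_by_host: Dict[str, str]) -> None:
        # Secrets live here, outside the agent's environment.
        self._secrets_by_host = secrets_by_host

    def authorize(self, request: OutboundRequest) -> OutboundRequest:
        host = urlsplit(request.url).hostname
        if host not in self._secrets_by_host:
            raise PermissionError(f"egress to {host} is not allowed")
        # Copy the headers so the agent's own request object is untouched.
        headers = dict(request.headers)
        headers["Authorization"] = f"Bearer {self._secrets_by_host[host]}"
        return OutboundRequest(url=request.url, headers=headers)
```

The request the agent holds never contains the token. Only the copy that leaves through the control plane does, and only for hosts on the allowlist.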

This is how we built prompt2bot. The agent lives in a managed environment. It gets VMs when it needs them. Secrets are injected at the network layer, never visible to the agent or the machine. The result is agents that can do real work, with real credentials, without the operational nightmare of managing a fleet of agent-owned computers.

The right tool for the right job

OpenClaw and coding agents are useful for what they're designed for: a developer working interactively with an AI on their own machine. That's a legitimate use case. But it's not the same use case as building an agent product.

If you're building something that serves users, you need an architecture where the agent is a service, not a process running on a computer. Where compute is a resource you allocate, not a permanent cost per user. Where the control plane is separate from the execution plane.

The demo where an agent uses a full computer is impressive. The production system where an agent is a full computer is a liability.
