Use prompt2bot as Your Startup's Frontend

2026-03-23

by Uri Walevski

You're technical. You have Claude Code. You could build an AI agent yourself. Clone a repo, set up a Telegram webhook, wire up some tool calls, deploy it on a VPS. How hard can it be?

Harder than you think. The first demo takes a day. A production-quality agent that doesn't embarrass you in front of paying users takes months.

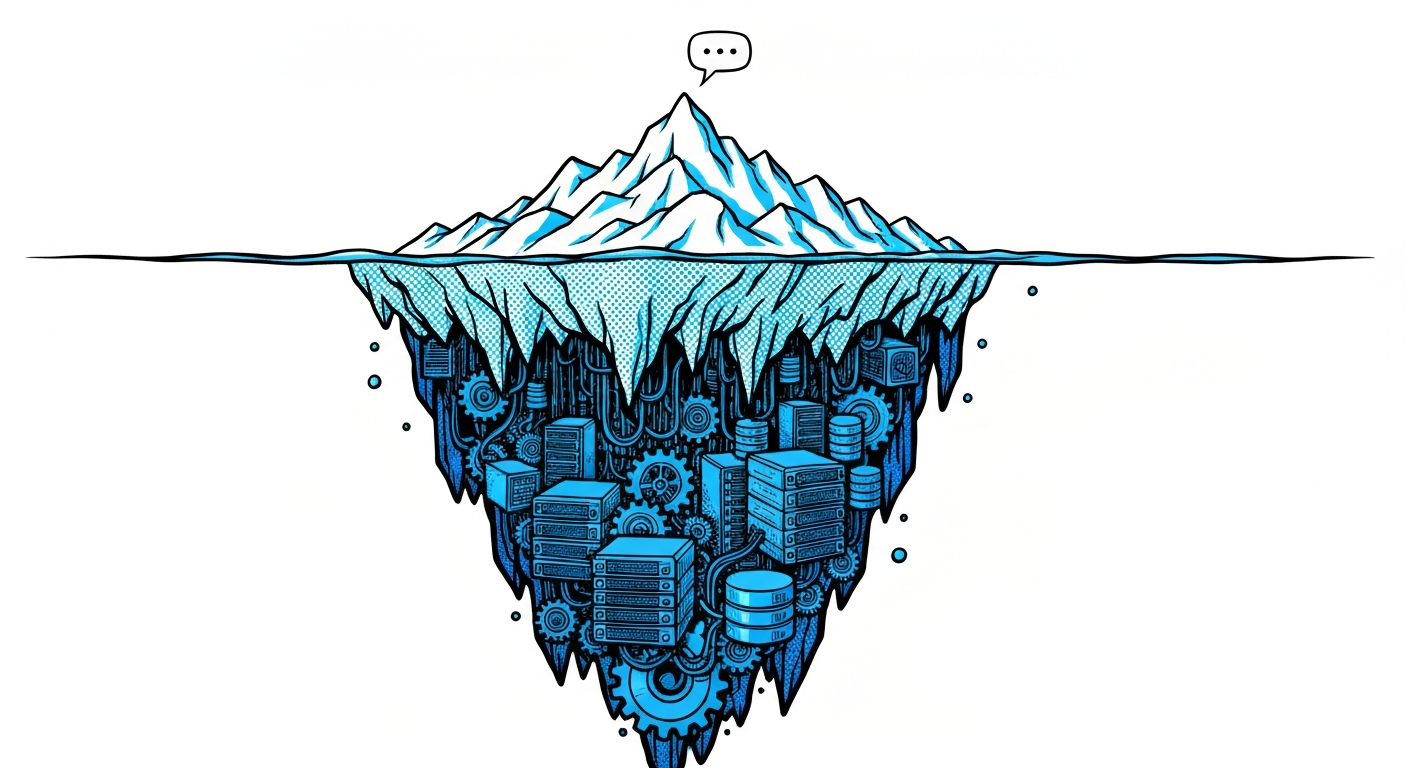

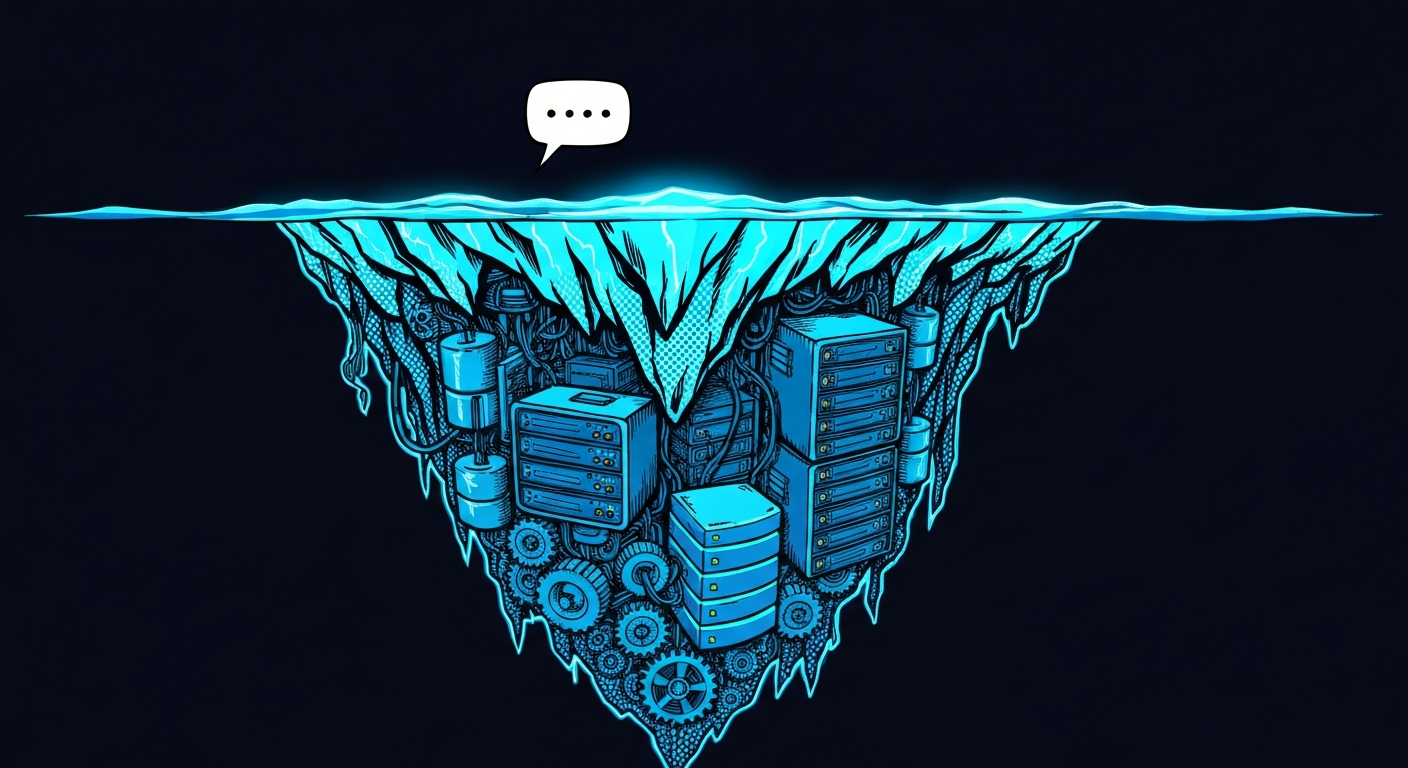

The iceberg

Getting a basic chatbot to respond is the easy part. It's maybe 5% of the work. Below the surface is everything else. The amount of implementation detail required for a production agent is enormous. Even if you use Claude Code to vibe-code the whole thing, you are still looking at months of vibe-coding just to get through the backlog of edge cases and integrations.

The agent harness is months of iteration. Writing the initial prompt is an afternoon. Getting the agent to behave consistently across thousands of edge cases requires a harness. You need anti-hallucination layers, subagents for complex tasks, context summarization so you don't blow the context window, and logic to prevent the bot from getting stuck in tool-calling loops. Every fix introduces a new regression somewhere else. You end up building evaluation frameworks just to keep track of what's broken.
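To make one of those harness pieces concrete, here is a minimal sketch of a guard against tool-calling loops. It assumes the harness sees each proposed tool call before executing it. The class name, the limits, and the idea of keying on the serialized arguments are illustrative assumptions, not prompt2bot's actual implementation.

```python
import json
from collections import Counter


class ToolLoopGuard:
    """Stops an agent run when one tool call repeats too often or the step budget runs out.

    Hypothetical helper for illustration; the limits are arbitrary defaults.
    """

    def __init__(self, max_steps: int = 20, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.seen = Counter()

    def allow(self, tool_name: str, args: dict) -> bool:
        """Record a proposed call and report whether the agent may execute it."""
        self.steps += 1
        if self.steps > self.max_steps:
            return False
        # Identical name + arguments count as the same call, regardless of key order.
        key = (tool_name, json.dumps(args, sort_keys=True))
        self.seen[key] += 1
        return self.seen[key] <= self.max_repeats
```

A real harness would likely do more than refuse the call, for example by telling the model why it was stopped, but even this much catches the common case of an agent retrying the same failing request forever.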

The chat UI and transport. A basic text box is easy. A professional chat interface needs streaming responses so the user doesn't wait 10 seconds for a reply, typing indicators, read receipts, and state synchronization across multiple tabs. It needs to render markdown, code blocks, and images correctly. It needs a robust websocket or SSE layer that handles poor network connections gracefully.
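As a rough illustration of the transport side, the sketch below formats streamed tokens as Server-Sent Events. The wire format (`id:`, `event:`, `data:` fields, blank line as terminator) comes from the SSE standard; the function names, the `token` and `done` event names, and the `end` payload are assumptions made up for this example.

```python
from typing import Iterable, Iterator


def format_sse(data: str, event: str = "", event_id: str = "") -> str:
    """Serialize one Server-Sent Event; a blank line terminates the event."""
    lines = []
    if event_id:
        lines.append(f"id: {event_id}")
    if event:
        lines.append(f"event: {event}")
    # Multi-line payloads must be sent as one "data:" field per line.
    for line in data.split("\n"):
        lines.append(f"data: {line}")
    return "\n".join(lines) + "\n\n"


def stream_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield one SSE event per model token, then a final 'done' event."""
    for index, token in enumerate(tokens):
        yield format_sse(token, event="token", event_id=str(index))
    yield format_sse("end", event="done")
```

The `id` field is what makes recovery from a dropped connection possible: a browser `EventSource` sends the last id it saw in the `Last-Event-ID` header when it reconnects, so the server can resume from that point. Actually honoring that header is the part this sketch leaves out.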

Chasing the latest models. The LLM landscape changes weekly. Switching from GPT-4 to Claude 3.5 Sonnet to whatever comes next isn't just a matter of changing a model string. Every provider has API peculiarities, different system prompt handling, varying tool-calling behaviors, context window quirks, and changing rate limits. Maintaining the abstraction layer over these models is a full-time job.

WhatsApp integration is its own project. The Business API requires Meta approval and message templates, and it imposes rate limits. Or you go through WhatsApp Web, which means managing persistent sessions that disconnect, reconnect logic, container isolation, and proxy rotation. Either path is weeks of work before your bot sends its first message.

Voice and video messages. Users will send them. Your bot needs to transcribe audio, understand images, handle documents. Each media type is a separate integration with its own failure modes.

File handling and RAG. Users send PDFs, screenshots, spreadsheets. They want the bot to "read this document and answer questions about it." That's a RAG pipeline: parsing, chunking, embedding, retrieval. Another month.
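To give a sense of scale, here is a sketch of just the chunking step, assuming the document has already been parsed to plain text. The character-based window and the default sizes are assumptions for illustration; production pipelines often split on tokens or sentence boundaries instead, and embedding and retrieval are separate pieces not shown here.

```python
def chunk_text(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap their neighbors.

    The overlap keeps a sentence that straddles a boundary visible in both chunks.
    """
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + size])
        # Stop once a window has reached the end of the text.
        if start + size >= len(text):
            break
    return chunks
```

For example, `chunk_text("abcdefghij", size=4, overlap=2)` produces four overlapping windows covering the whole string. Each chunk would then be embedded and stored for retrieval.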

VMs and code execution. If your agent needs to do anything beyond API calls, it needs a sandbox. Setting up isolated VMs, managing Docker containers, handling timeouts, and cleaning up resources is all infrastructure work.

Remote tools with authentication. Your agent calls your backend. How do you verify which user made the request? How do you prevent the LLM from fabricating user identities? You need cryptographic signing, context injection, replay protection. This is security engineering.
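One way to approach this is sketched below, under clearly stated assumptions: the platform, not the LLM, attaches the user id and signs the whole request with an HMAC over a shared secret, and the backend rejects stale timestamps and reused nonces. The field names, the five-minute window, and the in-memory nonce set are all hypothetical choices for this example and do not describe prompt2bot's actual protocol.

```python
import hashlib
import hmac
import json


def _mac(secret: bytes, user_id: str, timestamp: int, nonce: str, body: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of every signed field."""
    payload = json.dumps(
        {"user_id": user_id, "ts": timestamp, "nonce": nonce, "body": body},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hmac.new(secret, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def sign_request(secret: bytes, user_id: str, body: dict, nonce: str, timestamp: int) -> dict:
    """Platform side: attach the real user id and sign it together with the body."""
    signature = _mac(secret, user_id, timestamp, nonce, body)
    return {"user_id": user_id, "ts": timestamp, "nonce": nonce,
            "body": body, "signature": signature}


class RequestVerifier:
    """Backend side: checks the signature, the freshness, and nonce uniqueness."""

    def __init__(self, secret: bytes, max_age_seconds: int = 300):
        self.secret = secret
        self.max_age_seconds = max_age_seconds
        # Kept in memory for the sketch; a real service would expire old nonces.
        self.seen_nonces = set()

    def verify(self, request: dict, now: int) -> bool:
        expected = _mac(self.secret, request["user_id"], request["ts"],
                        request["nonce"], request["body"])
        if not hmac.compare_digest(expected, request["signature"]):
            return False  # tampered fields, e.g. a fabricated user id
        if abs(now - request["ts"]) > self.max_age_seconds:
            return False  # too old (or too far in the future)
        if request["nonce"] in self.seen_nonces:
            return False  # replay of a request already accepted
        self.seen_nonces.add(request["nonce"])
        return True
```

Because the user id is part of the signed payload and the model never holds the secret, a model that invents a different user id produces a request whose signature no longer matches.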

Secret management. Your agent needs API keys. If they're in the environment, the LLM can leak them. If they're in the prompt, users can extract them. Building a system where the agent can use credentials without seeing them is a real architecture problem.
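A common pattern for this, sketched here as an assumption rather than a description of prompt2bot's internals, is to let the model write placeholders and have the runtime substitute the real values only when the tool call executes, then redact them from anything returned to the model. The `{{secret:NAME}}` syntax and both function names are hypothetical.

```python
import re

# Hypothetical placeholder syntax the model is told to use, e.g. {{secret:API_KEY}}.
PLACEHOLDER = re.compile(r"\{\{secret:([A-Za-z0-9_]+)\}\}")


def inject_secrets(value, secrets: dict):
    """Return a copy of tool-call arguments with placeholders replaced by real values.

    Runs outside the model, just before the call executes. Unknown names raise KeyError.
    """
    if isinstance(value, str):
        return PLACEHOLDER.sub(lambda match: secrets[match.group(1)], value)
    if isinstance(value, dict):
        return {key: inject_secrets(item, secrets) for key, item in value.items()}
    if isinstance(value, list):
        return [inject_secrets(item, secrets) for item in value]
    return value


def redact(text: str, secrets: dict) -> str:
    """Replace any secret value in tool output with its placeholder before the model sees it."""
    for name, secret in secrets.items():
        text = text.replace(secret, "{{secret:%s}}" % name)
    return text
```

The model only ever sees `Bearer {{secret:API_KEY}}`, never the key itself. Plain string redaction is a weak backstop, since an encoded or partially printed secret slips through, which is part of why this is an architecture problem rather than a helper function.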

Hallucination detection, user isolation, anti-prompt-injection, scheduling, proactive messaging, multi-channel deployment. The list keeps going.

None of this is your moat

Here's the thing. Every single item above is identical whether you're building a customer support bot, a sales assistant, a production engineer, a task manager, or a therapy companion. The agent infrastructure layer doesn't change based on your vertical. It's all undifferentiated heavy lifting.

Your moat is the domain expertise. The specific tools your agent calls. The backend logic. The prompt that encodes your product's unique behavior. The integrations with your specific APIs and databases.

That's what prompt2bot is for. You focus on what makes your agent yours. We handle the platform it runs on.

You write the prompt. You define the tools. You build the backend they call. prompt2bot handles the WhatsApp sessions, the voice transcription, the file processing, the RAG pipeline, the VM orchestration, the secret encryption, the hallucination checking, the user isolation, the scheduling, the multi-channel deployment, and the thousand other things you'd spend months building yourself.

Migration risk is near zero

This is the part that makes the decision easy. Your investment in prompt2bot is the prompt and the tools. Both are plain text. The prompt is natural language describing your agent's behavior. The tools are API endpoints on your own servers.

If you ever outgrow the platform, or want to self-host, or switch to a different agent framework, you take your prompt and your tool specs with you. They're not locked into our format. A prompt that works on prompt2bot is plain natural language, so it carries over to any LLM, though a different model may need some retuning. Tool definitions are just OpenAPI-style schemas pointing at your own URLs.
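To show why a tool definition is portable, here is a sketch of what one could look like as plain data. The field names, the `create_ticket` tool, and the `api.example.com` URL are invented for illustration and are not prompt2bot's actual schema; the point is only that the definition is JSON-serializable data plus a URL you own.

```python
import json

# Hypothetical tool definition: a JSON-Schema-style parameter block plus your own endpoint.
CREATE_TICKET_TOOL = {
    "name": "create_ticket",
    "description": "Open a support ticket for the current user.",
    "url": "https://api.example.com/tickets",
    "method": "POST",
    "parameters": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "priority": {"type": "string", "enum": ["low", "high"]},
        },
        "required": ["title"],
    },
}


def missing_required(spec: dict, args: dict) -> list[str]:
    """List required parameters that a proposed tool call left out."""
    return [name for name in spec["parameters"]["required"] if name not in args]


# The whole definition survives a round trip through JSON, so it can be
# exported and loaded by a different framework.
exported = json.dumps(CREATE_TICKET_TOOL, indent=2)
```

Moving to another framework then means translating this structure into that framework's tool format, while the endpoint behind it stays untouched.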

For the web channel, Alice & Bot is fully open source: the chat widget, the end-to-end encryption (E2EE) protocol, the whole thing. If you switch to a different agent backend, you keep the same web UI. Swap out the webhook endpoint and you're done.

The months of work we save you on infrastructure, you never need to redo. The days of work you put into your prompt and tools, you never lose.

The math

This isn't about skill. An experienced dev team can build all of this. They'll also spend months doing it, and then maintain it forever. Every new channel, every new media type, every security patch, every WhatsApp API change is more work on top. That's months of senior engineering time not spent on the actual product.

Using prompt2bot: you're live in a day. Your agent talks to users on WhatsApp, Telegram, and the web. It handles voice, files, images. It has a VM. It has secure secrets. It detects hallucinations. It scales.

You didn't start a company to build chat infrastructure. Ship the product, not the plumbing.
