The levels of automation

2026-04-25

by Uri Walevski

Self-driving has levels. SAE defined them from 0 to 5 and now everyone roughly knows what they mean. Level 1: cruise control, you're driving. Level 2: the car steers, you supervise. Level 4: the car drives, you take a nap.

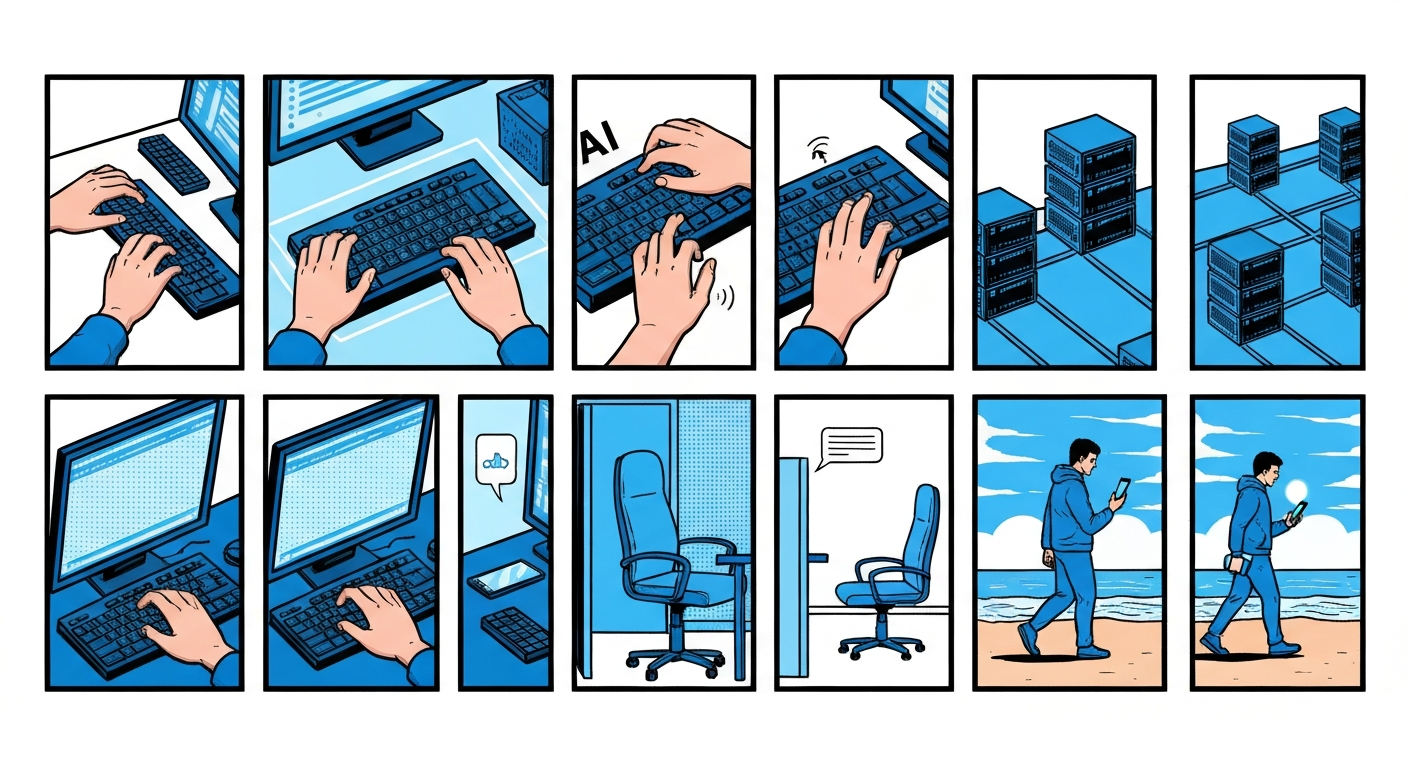

AI automation has the same ladder. We just haven't named the rungs.

I think about it in four levels, plus the baseline where there's no AI at all.

Level 0: no AI. Scripts, cron jobs, CI pipelines. The procedure is known, the steps are deterministic. You write it once, it runs the same way every time. This is most software and it works fine. If you know what needs to happen, just code it.

Level 1: AI in the loop. Copilot in your IDE. A model suggesting completions, spotting bugs, proposing refactors. You're driving. The AI is in the passenger seat pointing things out. The keyboard is in your hands. You decide what to accept.

This level covers most LLM pipelines. Text in, text out. The model classifies, extracts, summarizes. Your code moves the result to the next step. The LLM is just a function with better judgment than a regex. No autonomy, no multi-step reasoning. You control the flow.

The common mistake is calling this an agent. It's not. It's a very smart function.
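A minimal sketch of what "a very smart function" looks like in practice. The names here (`fake_model`, `classify`, `route`, the queue names) are hypothetical, and `fake_model` is a keyword stub standing in for a real LLM call. The point is only that the surrounding code owns the control flow.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: text in, text out.
# A real pipeline would call a model API here instead.
def fake_model(prompt: str) -> str:
    text = prompt.lower()
    if "refund" in text or "charged" in text:
        return "billing"
    return "other"

def classify(ticket: str, model: Callable[[str], str]) -> str:
    """Ask the model for a label, then validate it in ordinary code."""
    prompt = f"Classify this support ticket as billing or other:\n{ticket}"
    label = model(prompt).strip().lower()
    # The code, not the model, decides what counts as a valid answer.
    return label if label in {"billing", "other"} else "other"

def route(ticket: str, model: Callable[[str], str]) -> str:
    """Deterministic next step: the model never chooses what happens next."""
    label = classify(ticket, model)
    return "billing-queue" if label == "billing" else "general-queue"

if __name__ == "__main__":
    print(route("I was charged twice this month", fake_model))
    print(route("How do I reset my password?", fake_model))
```

Swap the stub for a real model and nothing else changes: one call, one answer, and your code decides the next step.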

Level 2: AI on the computer. Claude Code, OpenClaw, coding agents that have a terminal and a filesystem. The AI drives now. It opens files, writes code, runs tests, fixes failures. But you're in the driver's seat with your hand hovering over the wheel. You read what it's doing, you intervene when it takes a wrong turn. The AI has the keys but you haven't left the car.

Level 2 agents can do real work. They debug issues, deploy services, refactor codebases. But they run on your machine, using your terminal, your credentials. If something breaks you're there to ctrl-c. The human is still the safety net.

The problem with Level 2 is it doesn't scale to a product. You can't have a hundred users each watching their own agent. Human supervision is the bottleneck.

Level 3: AI with its own computer. The agent has its own VM. Its own filesystem, its own terminal, its own network. It doesn't run on your machine. It runs on a server and you talk to it through chat, the same way you'd message a human engineer on Slack.

You give it a goal and walk away. It figures out the rest. It writes code, provisions infrastructure, installs dependencies, pushes to GitHub. It messages you when it's done, or when it's stuck, or when it needs a decision you didn't anticipate. You're not watching it work. You're doing something else and getting updates.

This is where the relationship changes. At Level 1, the AI is a tool. At Level 2, the AI is a tool you supervise closely. At Level 3, the AI is a team member. It has its own computer, its own context, its own ongoing work. You delegate. You don't supervise. Crucially, at this level, one human works with one or more agents, but the agents rarely interact amongst themselves.

The infrastructure changes too. The AI's computer is ephemeral. It spins up when there's work, idles or disappears when there isn't. Secrets are injected at the network layer so the model never sees plaintext credentials. The AI can't do lasting damage by nuking its own runtime, because its runtime is disposable. The control plane is separate from the compute plane.

This is how we built prompt2bot. The agent lives in a managed environment. When it needs a computer, it gets one. Secrets are never visible to the model but work in the shell. When the work is done the machine goes away.
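One way to picture network-layer secret injection, as a sketch only. This is not prompt2bot's actual implementation. It assumes a hypothetical egress proxy that sits outside the agent's VM, a made-up `{{secret:NAME}}` placeholder syntax, and an illustrative token value. The model and the shell only ever handle the placeholder; the proxy swaps in the real value on the way out.

```python
import re

# Hypothetical secret store, held outside the agent's VM.
# The value below is illustrative, not a real credential.
SECRETS = {"GITHUB_TOKEN": "ghp_real_value_kept_outside_the_vm"}

# Assumed placeholder syntax: {{secret:NAME}}
PLACEHOLDER = re.compile(r"\{\{secret:([A-Z0-9_]+)\}\}")

def inject_secrets(headers: dict[str, str], secrets: dict[str, str]) -> dict[str, str]:
    """Return a copy of the outgoing headers with placeholders resolved."""
    def resolve(match: re.Match) -> str:
        name = match.group(1)
        if name not in secrets:
            # Fail closed rather than forwarding an unresolved placeholder.
            raise KeyError(f"unknown secret: {name}")
        return secrets[name]

    return {key: PLACEHOLDER.sub(resolve, value) for key, value in headers.items()}

if __name__ == "__main__":
    # What the agent writes: only the placeholder appears in its context.
    outgoing = {"Authorization": "Bearer {{secret:GITHUB_TOKEN}}"}
    # What leaves the proxy: the real credential, never echoed back to the model.
    print(inject_secrets(outgoing, SECRETS))
```

The design choice that matters is where the substitution happens: at a boundary the model can't read from, so a leaked transcript or a prompt injection only ever exposes the placeholder.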

Most agent platforms today are Level 2. They give the AI a computer but keep the human in the loop as a supervisor, because the computer is shared infrastructure and someone needs to make sure nothing catches fire. The jump to Level 3 means treating the AI less like a process you monitor and more like a colleague you talk to.

Level 4: a team of agents. Multiple agents with their own computers, collaborating toward a high-level goal. This is where agents have hierarchies or engage in direct conversations with each other. You're not giving one agent a task. You're setting direction for a small organization. One agent handles infrastructure, another writes code, a third reviews it, a fourth deploys and monitors. They coordinate among themselves, split work, catch each other's mistakes.

The human's role shifts again. You're not delegating to a team member. You're setting the vision for a team. The agents figure out division of labor, sequencing, and dependencies. You check in occasionally, like a manager getting updates from a lead.

We're not here yet. The coordination problem between agents is genuinely hard. When two agents disagree about an approach, who arbitrates? When one agent's work depends on another's output that's still in progress, what's the protocol? These are the same problems human teams face, and we don't yet have good answers for AI teams.

Right now we're at Level 3. A single agent on its own computer, doing real work, while you do something else. That's the state of the art. Level 4 is coming, but not today.
